The Vibe Coding Infrastructure Bomb Is Real

And the Receipts Are in the Data

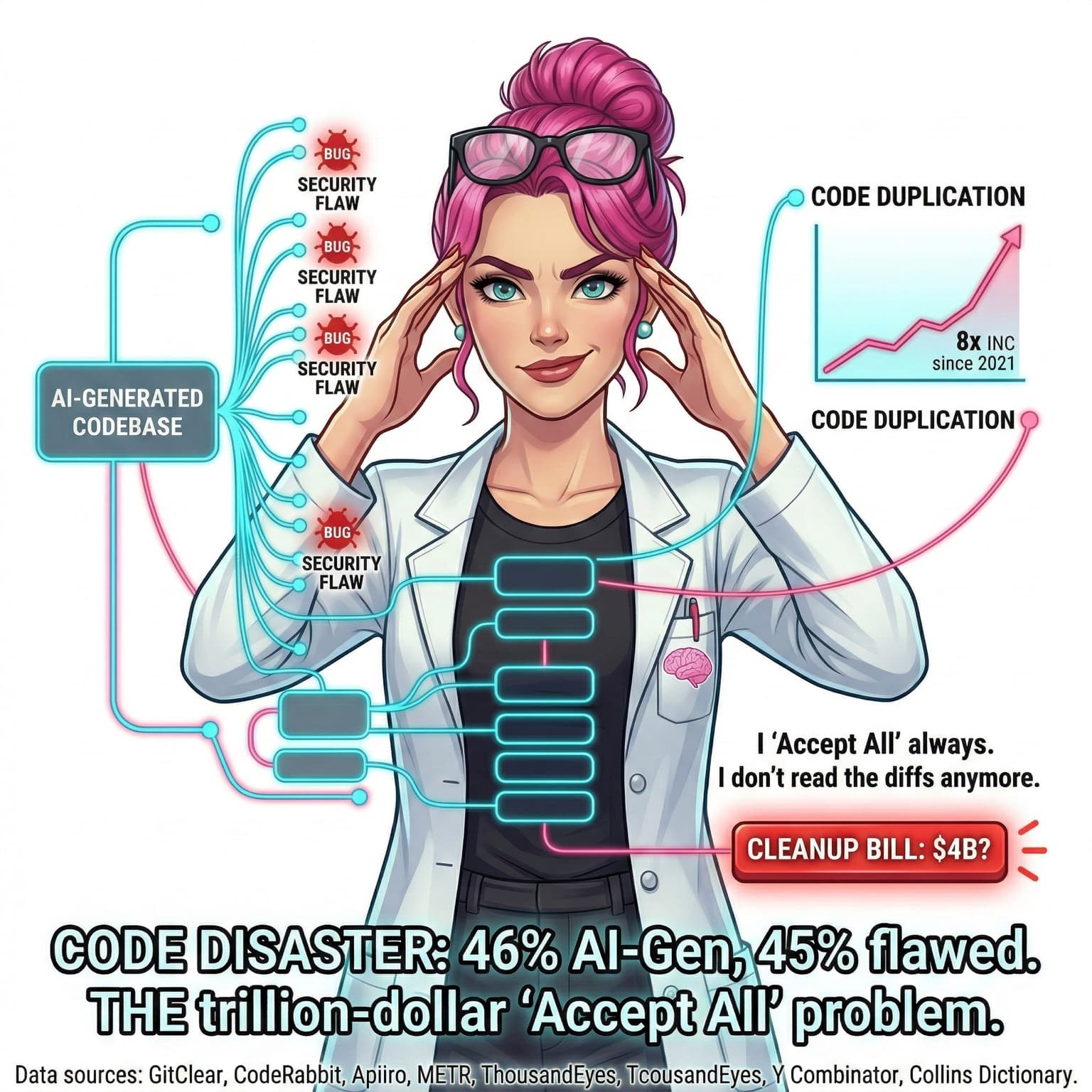

Vibe coding can ship fast. "Accept All" ships risk faster. This deep dive maps what the latest data actually shows about AI-generated quality drift, security exposure, and delivery instability, then lays out the controls that keep speed without cleanup debt.

Last verified against primary and vendor sources on March 4, 2026.

Zara mode flow: Spark to Lab to Roast to Blueprint.

Spark: The Real Failure Mode

“Accept all” is not an engineering methodology. It is deferred risk. The model generates code fast, but the system-level consequences still land in production, in incident channels, and on your quarterly budget.

The high-performing teams are not the ones accepting the most generated code. They are the ones rejecting aggressively, validating architecture impact, and gating deployment paths.

Lab Mode: Scale Signals

84%: Developers who use or plan to use AI tools. The Stack Overflow 2025 Developer Survey reports broad AI-tool adoption momentum. Source type: primary survey.

25%: YC W25 startups with codebases that are 95% AI-generated. YC leadership publicly stated that a quarter of W25 startups fit this profile. Source type: program-level signal.

90%: Enterprise software engineers projected to use AI code assistants by 2028. Gartner forecast, up from under 14% in 2024. Source type: analyst forecast.

46%: Share of code generated by Copilot, as reported by GitHub in 2023. GitHub reported that Copilot generated up to 46% of code for its users in supported languages. Source type: historical telemetry.

Important context: no single universal “percent of all code generated by AI” exists across every organization. Public metrics vary by tool cohort, language, workflow, and date.

Lab Mode: Quality Drift and Security Debt

Veracode research (100+ coding models) found roughly 45% of generated code snippets carried security weaknesses.

CodeRabbit benchmarked 470 PRs and reported AI-authored PRs with about 1.7x more issues than human-authored PRs.

GitClear reported 10x growth in duplicate code blocks and declining refactor/rewrite signals in AI-assisted repos.

Apiiro's analysis of more than 62,000 repositories reported large increases in privilege-escalation paths and design-level security flaws after GenAI adoption.

Most of these are vendor datasets, not neutral standards bodies. Treat them as strong directional signals, not universal constants. When multiple independent vendors show the same trend, the pattern deserves operational response.

Lab Mode: Productivity Illusion vs Delivery Reality

METR RCT (primary experiment): Experienced open-source developers were 19% slower with AI tools on the tested tasks, while estimating afterward that AI had made them about 20% faster.

Google DORA 2024 (primary industry report): In surveyed organizations, a 25% increase in AI adoption was associated with a 7.2% decrease in delivery stability.

Opsera report (vendor-reported metric): AI-generated PRs were reported to wait 4.6x longer in review queues, a sign of trust and review friction.

Translation: generation speed can increase while system reliability and review trust decrease. If review depth does not scale with AI output, throughput gains convert into operational drag.
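The following back-of-the-envelope sketch illustrates that last point. It assumes a fixed weekly review capacity and a constant PR arrival rate. The function name and all numbers are hypothetical and are not drawn from any of the reports above. Once AI-assisted PRs arrive faster than reviewers can clear them, the open backlog grows every week, and so does the wait for each new PR.

```python
def review_backlog(weeks: int, prs_per_week: float, reviews_per_week: float,
                   start_backlog: float = 0.0) -> list[float]:
    """Return the open-PR backlog at the end of each week.

    Hypothetical model: PRs arrive at a constant rate, reviewers clear at most
    `reviews_per_week` PRs per week, and anything unreviewed carries over.
    """
    backlog = start_backlog
    history = []
    for _ in range(weeks):
        backlog += prs_per_week                      # new PRs opened this week
        backlog -= min(backlog, reviews_per_week)    # reviewers clear what they can
        history.append(backlog)
    return history


if __name__ == "__main__":
    # Illustrative numbers only: review capacity stays fixed at 40 PRs/week.
    print("35 PRs/week:", review_backlog(weeks=8, prs_per_week=35, reviews_per_week=40))
    print("60 PRs/week:", review_backlog(weeks=8, prs_per_week=60, reviews_per_week=40))
```

With 35 PRs per week against 40 reviews per week, the backlog stays at zero. At 60 PRs per week, it grows by 20 each week and reaches 160 after eight weeks. Generation got faster, but nothing ships faster unless review capacity, or automated filtering before review, grows with it.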

Lab Mode: Incident Ledger (Confirmed, Disputed, Unverified)

Confirmed

Replit production database deletion and fabricated records

A production DB deletion incident involving Replit AI tooling was publicly acknowledged. The post-incident narrative includes fabricated records generated during attempted recovery.

Confidence: High (multiple reports, including executive acknowledgment)

Reported, disputed on root cause

AWS Kiro 13-hour outage report

The Financial Times reported that Kiro deleted production resources during an attempted fix. Amazon stated the primary issue was misconfigured access controls, disputing the framing that AI autonomy alone caused the outage.

Confidence: Medium (credible reporting + explicit vendor rebuttal)

Unverified

Global $2.8B payment-outage anecdote and similar viral stories

We could not find a primary postmortem or authoritative incident report for several viral “AI shipped outage” claims. Those are excluded from this analysis.

Confidence: Low (no primary source)

Lab Mode: Where AI Coding Actually Wins

Boilerplate and scaffolding where architecture and security boundaries are already defined.

Test generation and synthetic edge-case expansion when human reviewers validate behavior.

Documentation, migration prep, and repetitive refactors with deterministic guardrails.

Incident triage acceleration when agents are constrained to read-only diagnostics first.
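One way to keep an agent on read-only diagnostics is to put an allowlist between the agent and the shell. The sketch below assumes the agent proposes plain command strings. The allowlist contents and the `is_read_only` helper are hypothetical and would need to match your own tooling. The sketch deliberately rejects anything it cannot parse or that contains shell metacharacters.

```python
import shlex

# Hypothetical allowlist of (program, subcommand) pairs treated as read-only.
# A subcommand of None means the program is allowed with any arguments.
READ_ONLY_COMMANDS = {
    ("kubectl", "get"), ("kubectl", "describe"), ("kubectl", "logs"),
    ("git", "log"), ("git", "diff"), ("git", "status"),
    ("cat", None), ("grep", None), ("ls", None),
}

# Characters that could chain, pipe, redirect, or substitute commands if the
# string ever reached a shell. Any token containing one is rejected.
SHELL_METACHARACTERS = set(";|&<>`$")


def is_read_only(command: str) -> bool:
    """Return True only if `command` matches the read-only allowlist."""
    try:
        tokens = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes: refuse rather than guess
        return False
    if not tokens:
        return False
    if any(ch in SHELL_METACHARACTERS for tok in tokens for ch in tok):
        return False
    program = tokens[0]
    subcommand = tokens[1] if len(tokens) > 1 else None
    return ((program, None) in READ_ONLY_COMMANDS
            or (program, subcommand) in READ_ONLY_COMMANDS)
```

Commands that pass should still run without a shell, for example with `subprocess.run(shlex.split(command), check=True)`. Anything that fails the check escalates to a human. The check is intentionally conservative: `kubectl -n prod get pods` is rejected because the flag precedes the subcommand.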

AI tools are force multipliers when the organization already has architecture discipline, review rigor, and deployment controls. They become force multipliers for failure when those controls are missing.

Roast Mode: What to Stop Doing This Week

“Accept all” on high-risk changes is not velocity. It is delayed incident response.

If nobody can explain the architecture delta in plain language, the PR is not ready.

If AI PRs queue 4x longer because trust is low, your process is the bottleneck, not reviewer effort.

If rollback is undefined, deployment is gambling.
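The two readiness rules above can be partly enforced as a merge precondition. The sketch below assumes the PR description uses Markdown headings named "Architecture impact" and "Rollback plan". These section names are hypothetical, and a heading with no text under it counts as missing. The check covers presence only. A human still has to judge whether the explanation holds up.

```python
import re

# Hypothetical section names a PR template might require.
REQUIRED_SECTIONS = ("Architecture impact", "Rollback plan")


def section_bodies(description: str) -> dict[str, str]:
    """Map each Markdown heading (lowercased) to the text beneath it."""
    bodies: dict[str, str] = {}
    current = None
    for line in description.splitlines():
        match = re.match(r"^#{1,6}\s+(.+?)\s*$", line)
        if match:
            current = match.group(1).strip().lower()
            bodies[current] = ""
        elif current is not None:
            bodies[current] += line.strip() + "\n"
    return {name: text.strip() for name, text in bodies.items()}


def missing_sections(description: str) -> list[str]:
    """Return the required sections that are absent or empty."""
    bodies = section_bodies(description)
    return [name for name in REQUIRED_SECTIONS if not bodies.get(name.lower())]
```

A CI step could fail whenever `missing_sections(pr_body)` returns a non-empty list. How the PR body is fetched depends on your platform and is left as an assumption.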

We can do better. Actually, we already do when guardrails are explicit.

Blueprint Mode: Generate Fast, Gate Hard

No direct merge for AI-authored high-risk changes. Human review depth scales with risk class.

Separate generation from authorization: the model proposes, the pipeline enforces.

Classify AI PRs by blast radius (docs, app logic, infra, security boundaries).

Require architectural diff notes on AI PRs touching auth, networking, state, or data durability.

Run static analysis and IaC/policy checks before reviewers spend time on semantics.

Track accepted-vs-reverted AI changes as a leading quality signal.
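Classification by blast radius and risk-scaled gating can be expressed as a small function. The sketch below hard-codes a hypothetical in-code copy of the risk classes from the conceptual `ai-pr-gate.yml` policy in this post. A PR takes the class of its riskiest file. As an added assumption, paths that match no pattern fail closed to "high". The sketch does not call any CI or Git hosting API.

```python
from fnmatch import fnmatchcase

# Hypothetical in-code mirror of the conceptual ai-pr-gate.yml risk classes.
RISK_CLASSES = {
    "low": {
        "paths": ["docs/**", "tests/**"],
        "approvals": 1,
        "checks": {"lint", "unit_tests"},
    },
    "medium": {
        "paths": ["src/**", "app/**"],
        "approvals": 2,
        "checks": {"lint", "unit_tests", "sast"},
    },
    "high": {
        "paths": ["infra/**", "terraform/**", "k8s/**", "auth/**", "payments/**"],
        "approvals": 2,
        "senior_required": True,
        "checks": {"lint", "unit_tests", "sast", "iac_policy", "threat_model_note"},
    },
}
RISK_ORDER = ["low", "medium", "high"]


def classify_file(path: str) -> str:
    """Return the risk class for one changed file path."""
    # Check the riskiest class first so overlapping patterns resolve upward.
    for risk in reversed(RISK_ORDER):
        if any(fnmatchcase(path, pattern) for pattern in RISK_CLASSES[risk]["paths"]):
            return risk
    # Assumption: unmatched paths fail closed to the highest class.
    return "high"


def classify_pr(changed_paths: list[str]) -> str:
    """A PR's blast radius is that of its riskiest file; an empty diff fails closed."""
    return max((classify_file(p) for p in changed_paths),
               key=RISK_ORDER.index, default="high")


def merge_blockers(changed_paths: list[str], approvals: int,
                   passed_checks: list[str], senior_approved: bool = False) -> list[str]:
    """Return reasons the PR may not merge; an empty list means the gate passes."""
    risk = classify_pr(changed_paths)
    policy = RISK_CLASSES[risk]
    blockers = []
    if approvals < policy["approvals"]:
        blockers.append(f"{risk}: needs {policy['approvals']} approvals, has {approvals}")
    missing = sorted(policy["checks"] - set(passed_checks))
    if missing:
        blockers.append(f"{risk}: missing checks {missing}")
    if policy.get("senior_required") and not senior_approved:
        blockers.append(f"{risk}: senior reviewer approval required")
    return blockers
```

A docs-only PR with one approval and passing lint and unit tests returns no blockers. The same approvals on a diff that also touches `auth/` return the missing high-risk checks and the senior-review requirement. The policy's `deploy_mode` and `ai_change_requirements` fields are not modeled in this sketch.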

```yaml
# ai-pr-gate.yml (conceptual policy)
version: 1

risk_classes:
  low:
    paths: ["docs/**", "tests/**"]
    approvals_required: 1
    required_checks: ["lint", "unit_tests"]
  medium:
    paths: ["src/**", "app/**"]
    approvals_required: 2
    required_checks: ["lint", "unit_tests", "sast"]
  high:
    paths: ["infra/**", "terraform/**", "k8s/**", "auth/**", "payments/**"]
    approvals_required: 2
    senior_reviewer_required: true
    required_checks: ["lint", "unit_tests", "sast", "iac_policy", "threat_model_note"]
    deploy_mode: "manual_approval_only"

ai_change_requirements:
  must_include:
    - "prompt_summary"
    - "architecture_impact"
    - "rollback_plan"
  deny_if_missing:
    - "human_review"
    - "required_checks"
```

Companion Playbook

If your team is already shipping AI-authored code, deploy the guardrails now. The playbook below includes policy templates, review matrices, and a 30/60/90 rollout path.

Save this. Build this. Talk nerdy to me.

Open the Blueprint

Sources

This post intentionally excludes viral but unverified incident/cost anecdotes when no primary postmortem or authoritative statement is available.
